Companies approach artificial intelligence with both interest and caution. AI promises automation and new ways to interact with enterprise data, yet organizations often hesitate before connecting these systems to operational environments. Concerns about hallucinations, exposure of sensitive data, and uncontrolled automation emerge quickly once AI moves beyond experimentation.

In many organizations, these concerns center on how AI models interact with enterprise systems. Once AI begins accessing databases, APIs, documents, and internal applications, the architecture of those integrations determines whether the system remains reliable, maintainable, and secure.

The Model Context Protocol (MCP) emerged as one approach to structuring these interactions. By separating model reasoning from system access, applications that implement this protocol (commonly called MCP connectors, or simply MCPs) limit the blast radius of model errors and prompt injections. Acting as connectors, these applications can enforce access controls, audit logging, and data masking, reducing the risks of letting models interact with sensitive enterprise data.

To understand why this architectural structure becomes necessary, it helps to look at how enterprise AI systems first evolved.

Early enterprise AI systems addressed a basic limitation: access to company knowledge. Models trained on general datasets don’t include operational information such as internal documentation, reports, or the structured and unstructured datasets stored in databases and data lakes.

Retrieval-Augmented Generation (RAG) introduced a practical solution. By retrieving relevant documents at runtime and incorporating them into the model’s context, organizations grounded AI responses in internal data rather than relying solely on training data. This approach enabled assistants to search internal documentation, summarize policies, and retrieve insights from enterprise knowledge bases.
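The retrieval step described above can be sketched in a few lines. This is a minimal illustration rather than a production design: the `DOCUMENTS` store, the `retrieve` and `build_prompt` helpers, and the word-overlap scoring are all assumptions made for the example, and a real deployment would typically use embeddings and a vector index instead.

```python
import re
from collections import Counter

# Hypothetical in-memory knowledge base; the documents are invented sample text.
DOCUMENTS = {
    "leave-policy": "Employees may carry over up to five unused vacation days into the next year.",
    "expense-policy": "Travel expenses must be submitted within thirty days with receipts attached.",
    "security-policy": "Passwords must be rotated every ninety days and never shared by email.",
}


def tokenize(text: str) -> Counter:
    """Lowercase the text and count its alphabetic words."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def retrieve(question: str, top_k: int = 1) -> list[str]:
    """Rank documents by word overlap with the question (a stand-in for vector search)."""
    question_words = tokenize(question)
    scored = []
    for doc_id, text in DOCUMENTS.items():
        overlap = sum((question_words & tokenize(text)).values())
        scored.append((overlap, doc_id))
    # Highest overlap first; ties are broken alphabetically by document id.
    scored.sort(key=lambda item: (-item[0], item[1]))
    return [doc_id for score, doc_id in scored[:top_k] if score > 0]


def build_prompt(question: str) -> str:
    """Place the retrieved text in the prompt so the model answers from internal data."""
    context = "\n".join(f"[{doc_id}] {DOCUMENTS[doc_id]}" for doc_id in retrieve(question))
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}"
    )


print(build_prompt("How many vacation days can I carry over?"))
```

The string returned by `build_prompt` is what would be sent to the model, so the answer is grounded in the retrieved policy text rather than in training data alone.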

While RAG solved the knowledge access problem, enterprise AI systems soon faced a broader challenge. AI agents began querying databases, interacting with APIs, and coordinating actions across enterprise systems. Organizations also started using multiple models for different tasks, including reasoning, document analysis, and image generation.

In many early implementations, these capabilities lived inside the same application layer that orchestrated prompts and retrieval pipelines. This approach enabled rapid experimentation, but each new integration introduced dependencies among the model layer, application code, and enterprise infrastructure. Over time, the integration layer became the most complex part of the system.

MCP provides a way to organize these interactions. Instead of embedding integrations into prompts or application logic, MCP lets AI agents interact with external systems in real time through connectors that represent defined capabilities. These connectors encapsulate the logic required to access enterprise systems, execute queries, or trigger operations while keeping those integrations separate from the model’s reasoning layer.

In this architecture, the model focuses on reasoning while MCP connectors execute actions in external systems such as databases, APIs, or document services. This separation creates a modular structure where context retrieval, system interaction, and model reasoning operate as distinct layers.
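To make the separation concrete, the sketch below shows a connector that applies a role check, audit logging, and masking before any result reaches the model. It uses plain Python rather than an MCP SDK, and the `Connector` class, the `lookup_customer` handler, the role names, and the email-masking rule are hypothetical names chosen for illustration.

```python
import re
from dataclasses import dataclass, field
from typing import Callable

EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def mask_emails(text: str) -> str:
    """Replace email addresses before the text is returned to the model."""
    return EMAIL_PATTERN.sub("[redacted-email]", text)


@dataclass
class Connector:
    """Hypothetical connector: one named capability with its own governance rules."""
    name: str
    allowed_roles: set[str]
    handler: Callable[[str], str]
    audit_log: list[dict] = field(default_factory=list)

    def call(self, role: str, argument: str) -> str:
        allowed = role in self.allowed_roles
        # Every attempt is recorded, including the ones that are rejected.
        self.audit_log.append({"connector": self.name, "role": role, "allowed": allowed})
        if not allowed:
            raise PermissionError(f"role {role!r} may not call {self.name!r}")
        return mask_emails(self.handler(argument))


def lookup_customer(customer_id: str) -> str:
    # Stand-in for a real system call; the record is invented sample data.
    return f"Customer {customer_id}: contact jane.doe@example.com"


crm = Connector("crm.lookup_customer", {"support_agent"}, lookup_customer)
print(crm.call("support_agent", "42"))
```

Because the checks live in the connector, they apply no matter which model or prompt produced the request.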

The result is a system that evolves more easily. Connectors interacting with enterprise systems can be changed without affecting the agent’s reasoning logic, and models can be replaced without rewriting integrations.
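A small sketch of why this helps: if the agent depends only on a narrow model interface and a table of connectors, either side can be replaced independently. The `Model` protocol, the two toy model classes, and the connector names below are assumptions for illustration, with keyword matching standing in for actual model reasoning.

```python
from typing import Callable, Protocol


class Model(Protocol):
    """The only thing the agent needs from a model: a choice of connector."""
    def choose_tool(self, request: str) -> str: ...


class KeywordModel:
    # Toy stand-in for one model.
    def choose_tool(self, request: str) -> str:
        return "sales_report" if "sales" in request.lower() else "search_docs"


class AlwaysSearchModel:
    # Toy stand-in for a replacement model with different behavior.
    def choose_tool(self, request: str) -> str:
        return "search_docs"


# Connectors are registered by name and know nothing about the model that calls them.
CONNECTORS: dict[str, Callable[[str], str]] = {
    "sales_report": lambda request: "sales report generated",
    "search_docs": lambda request: "documents searched",
}


def run_agent(model: Model, request: str) -> str:
    """The model decides which connector to use; the connector performs the action."""
    tool_name = model.choose_tool(request)
    return CONNECTORS[tool_name](request)


print(run_agent(KeywordModel(), "Show Q3 sales"))
print(run_agent(AlwaysSearchModel(), "Show Q3 sales"))
```

Swapping `KeywordModel` for `AlwaysSearchModel` changes the decision but requires no change to `CONNECTORS`, and replacing a connector's implementation requires no change to either model.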

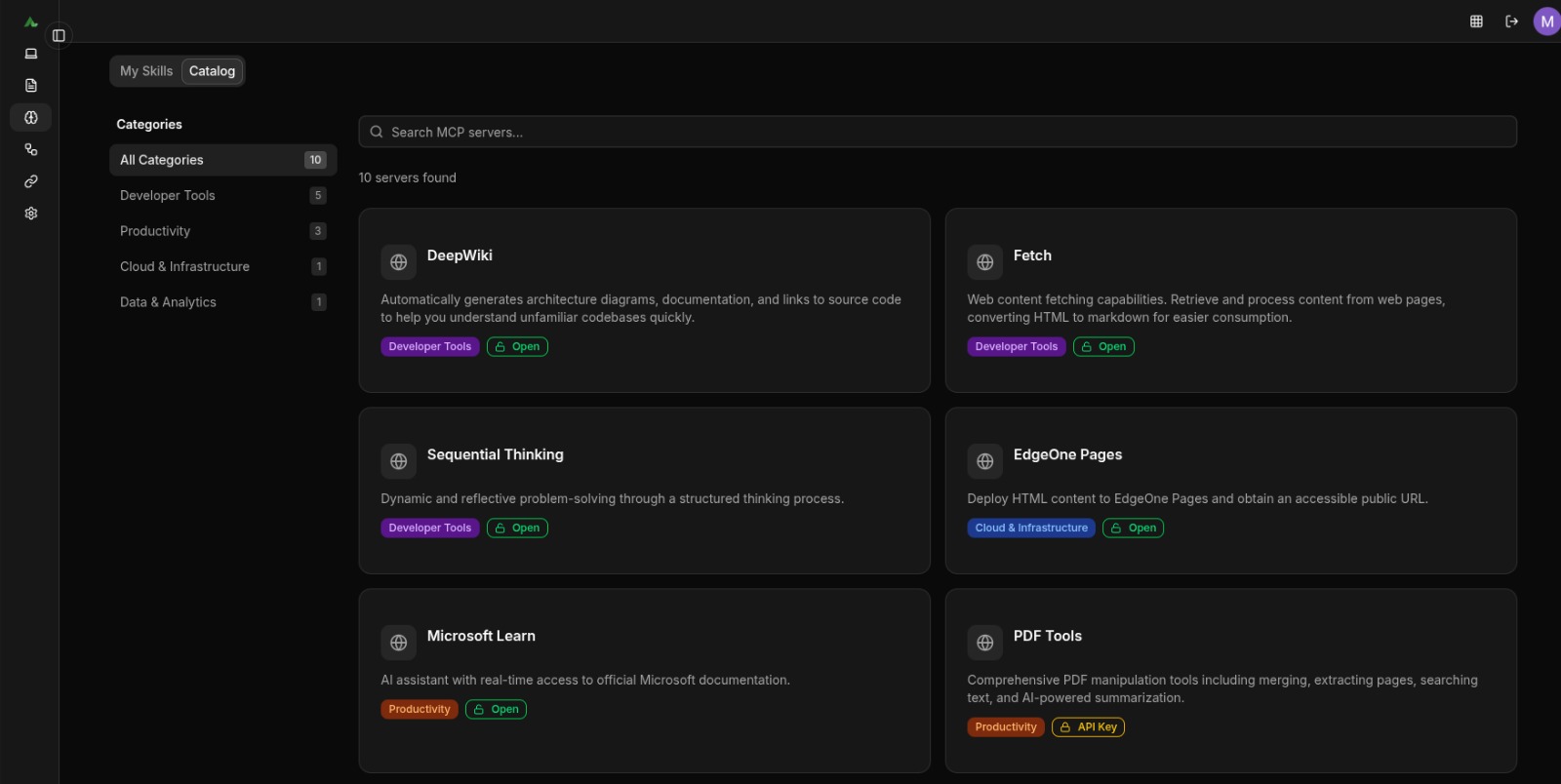

Within eSolutions, MCP integrations operate within the Alteus platform, which provides a centralized environment for organizations to launch and manage AI initiatives while connecting agents to enterprise data, tools, and models.

The platform includes an AI Agent Catalogue where agents configured for specific workflows can be deployed across departments. These agents combine language models and enterprise data access through MCP connectors that allow interaction with external systems.

In one example, an MCP connector was defined to interact with a healthcare data platform that uses Dremio as its data-exposure layer. Dremio provides a unified SQL-query interface across multiple underlying data sources, enabling the connector to efficiently query and retrieve data from diverse datasets within the organization.

Through this integration, AI agents can generate reports directly from the underlying datasets, significantly accelerating reporting workflows.
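As a rough sketch of what a reporting connector over a SQL interface might look like, the example below uses an in-memory SQLite database as a stand-in for the real query layer. The `admissions` table, its rows, and the `run_report` function are invented for illustration, and the decision to accept only single `SELECT` statements is an assumption rather than a description of the actual connector.

```python
import sqlite3


def make_demo_source() -> sqlite3.Connection:
    """Build an in-memory SQLite database that stands in for the SQL interface."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE admissions (department TEXT, patients INTEGER)")
    # Made-up sample rows, not real data.
    conn.executemany(
        "INSERT INTO admissions VALUES (?, ?)",
        [("cardiology", 12), ("oncology", 7), ("cardiology", 5)],
    )
    return conn


def run_report(conn: sqlite3.Connection, sql: str, params: tuple = ()) -> list[tuple]:
    """Run a query on behalf of an agent, accepting only a single SELECT statement.

    The prefix check is a naive guard for the sketch; a real deployment would
    also rely on database-level, read-only permissions.
    """
    statement = sql.strip().rstrip(";")
    if not statement.lower().startswith("select") or ";" in statement:
        raise ValueError("only single SELECT statements are allowed")
    return conn.execute(statement, params).fetchall()


conn = make_demo_source()
rows = run_report(
    conn,
    "SELECT department, SUM(patients) FROM admissions "
    "GROUP BY department ORDER BY department",
)
print(rows)
```

The agent supplies the query and receives rows back; connection details and the restriction on statement types stay inside the connector.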

The same architecture also enables the creation of web applications where AI interfaces connect directly to enterprise data sources.

The Dremio integration illustrates how MCP connectors operate within the platform’s broader catalogue of integrations. Some of these connectors are built-in and generic, including RAG over internal knowledge bases, PDF tools for document manipulation, and web content retrieval capabilities.

Beyond these generic integrations, the platform supports adding proprietary MCP connectors defined in JSON. This approach allows organizations to extend the system through integrations tailored to their internal environment without requiring extensive specialized development. These connectors can integrate enterprise systems such as custom internal applications, financial information systems, project management tools, or internal databases. Because these capabilities exist as connectors rather than embedded logic, the platform remains adaptable as models and tools evolve.
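For a sense of what a JSON-defined connector could look like, the sketch below loads and validates a definition. The field names (`name`, `description`, `endpoint`, `tools`), the example URL, and the `load_connector` helper are hypothetical: they illustrate the general idea and are not the platform's actual schema.

```python
import json

# Hypothetical connector definition; every field name and value here is illustrative.
CONNECTOR_JSON = """
{
  "name": "finance-ledger",
  "description": "Read-only access to the internal ledger",
  "endpoint": "https://ledger.internal.example/mcp",
  "tools": [
    {"name": "get_invoice", "parameters": {"invoice_id": "string"}}
  ]
}
"""

REQUIRED_FIELDS = ("name", "description", "endpoint", "tools")


def load_connector(raw: str) -> dict:
    """Parse a connector definition and reject it if required fields are missing."""
    definition = json.loads(raw)
    missing = [name for name in REQUIRED_FIELDS if name not in definition]
    if missing:
        raise ValueError(f"connector definition is missing: {', '.join(missing)}")
    for tool in definition["tools"]:
        if "name" not in tool:
            raise ValueError("every tool needs a name")
    return definition


connector = load_connector(CONNECTOR_JSON)
print(connector["name"], [tool["name"] for tool in connector["tools"]])
```

Validating definitions at load time means a malformed connector is rejected before any agent can call it.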

A similar pattern can support operational environments such as call centers. In this scenario, an MCP connector provides agents with controlled access to internal knowledge sources and operational procedures. The system retrieves relevant information from call center documentation and presents it to the operator during customer interactions, enabling faster access to accurate guidance without requiring manual searches across internal systems.

Another common use case is email triage and routing. An MCP connector integrates with the email system to securely read inbound messages and metadata. The agent classifies subject and intent (e.g., support, invoicing, onboarding, complaints, security) and routes the email or triggers the next step: creating a ticket, drafting a reply for approval, or escalating high-risk messages, while keeping access and actions governed through the connector layer.
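The triage flow can be sketched as a classification step followed by a routing table. In the example below, keyword rules stand in for the model's classification, and the `Email` class, the keyword lists, and the mapping from intent to next step are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Email:
    sender: str
    subject: str
    body: str


# Keyword rules stand in for the model's classification step.
# Security is listed first so that high-risk messages take priority.
INTENT_KEYWORDS = {
    "security": ("phishing", "password", "breach"),
    "invoicing": ("invoice", "payment", "refund"),
    "complaints": ("complaint", "unacceptable", "disappointed"),
    "onboarding": ("onboarding", "getting started", "new account"),
}

# Hypothetical mapping from intent to the next step in the workflow.
NEXT_STEP = {
    "security": "escalate",
    "complaints": "draft_reply_for_approval",
    "invoicing": "create_ticket",
    "onboarding": "create_ticket",
    "support": "create_ticket",
}


def classify(email: Email) -> str:
    """Return the first intent whose keywords appear in the subject or body."""
    text = f"{email.subject} {email.body}".lower()
    for intent, keywords in INTENT_KEYWORDS.items():
        if any(keyword in text for keyword in keywords):
            return intent
    return "support"  # default when nothing more specific matches


def route(email: Email) -> dict:
    """Combine the classification with the action the connector layer would trigger."""
    intent = classify(email)
    return {"intent": intent, "action": NEXT_STEP[intent]}


print(route(Email("a@example.com", "Invoice 1042 overdue", "Please confirm payment status.")))
```

In a real deployment the returned action would be carried out through the connector, which is where permissions on reading mail, creating tickets, or sending replies would be enforced.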

As organizations integrate AI into operational workflows, architecture determines whether these systems remain maintainable. Models continue to evolve, data sources expand, and new tools appear regularly.

Protocols such as MCP support a modular integration model where reasoning, system interaction, and orchestration operate as separate layers. This structure allows AI systems to evolve gradually while maintaining control over how models interact with enterprise infrastructure.

As companies move AI into production systems, much of the challenge lies in how the integrations between models and enterprise infrastructure are designed. Addressing these architectural questions early can make the difference between a scalable, secure AI deployment and one that introduces risk or technical debt.

***

Ready to scale your AI securely? Contact us to discuss your integration needs and build a robust AI ecosystem.

Emil Calofir is the Head of AI at eSolutions, where he leads the integration of AI solutions across a range of projects, with a strong focus on Generative AI and building practical, scalable capabilities that support business needs. With a software architecture background and broad industry exposure, he helps teams translate complex requirements into robust, production-ready systems.