In this article, I'm going to show you how our incredible team of data engineers builds a modern and AI-ready data platform from scratch. We will explore how we start with a small dataset and gradually build a reporting-ready data lake and a fully modular data platform.

Before we start, let's talk about data lakes versus data platforms.

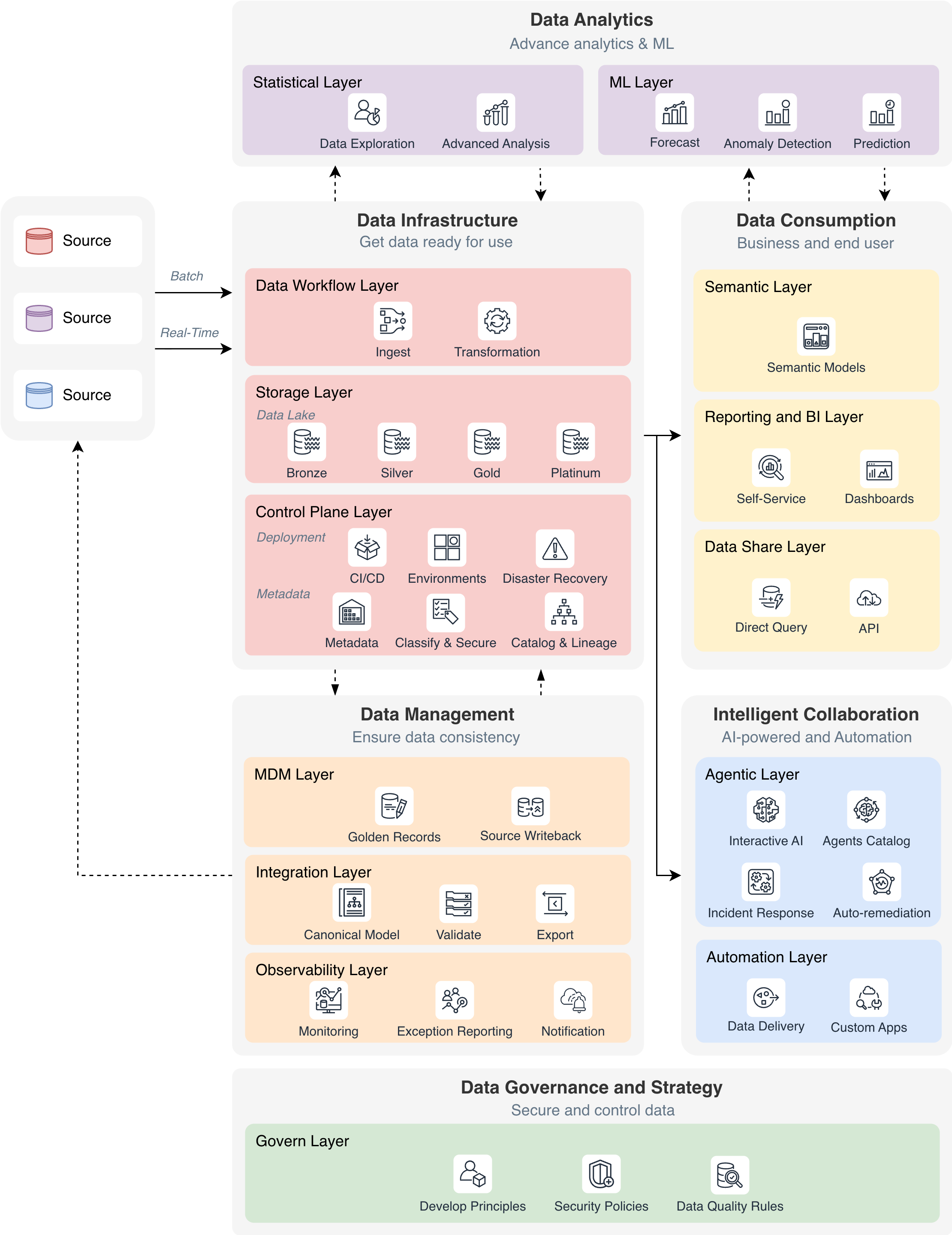

A Data Lake is fundamentally a storage platform where raw data from various sources is collected and stored. Its primary focus is consolidating data, improving data quality, and enabling reporting, business intelligence, and accessibility.

A Data Platform, however, represents an end-to-end ecosystem that goes far beyond simple storage. It covers the entire data lifecycle and creates a trusted, scalable foundation for analytics, automation, and AI readiness.

Building a modern data platform is a progressive process, not a one-off task. Let's take a look at the five phases of building such a platform.

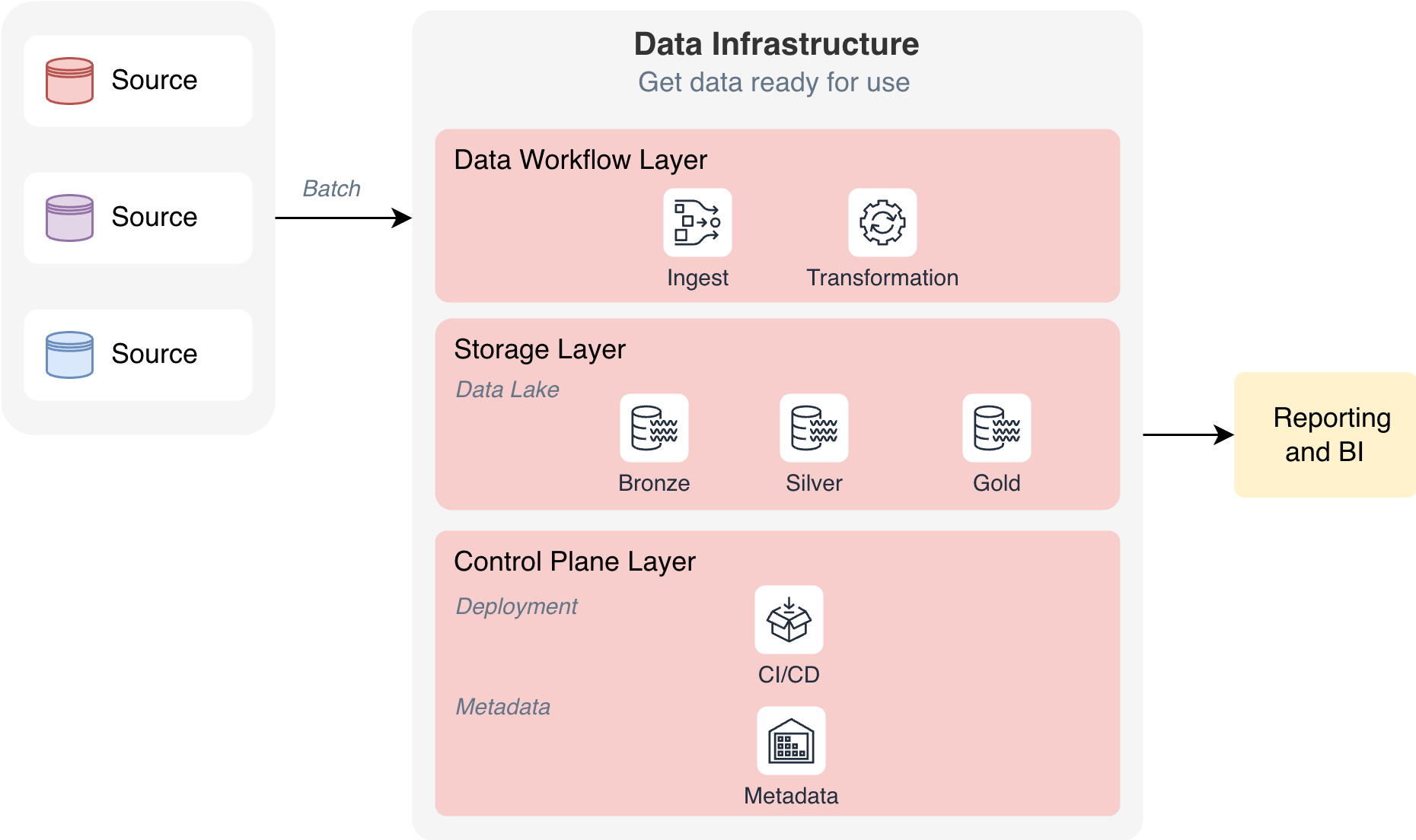

Our data journey begins with establishing the foundational Data Infrastructure component, designed to transform raw data into reporting-ready assets. The main objectives of this phase are to build a single source of truth, accelerate reporting capabilities, and reduce pressure on operational data source systems by centralizing data processing and storage.

This component consists of three essential layers:

This foundational phase establishes the core data processing capabilities that enable organizations to move from disparate, siloed data sources to a unified, analytics-ready data repository.

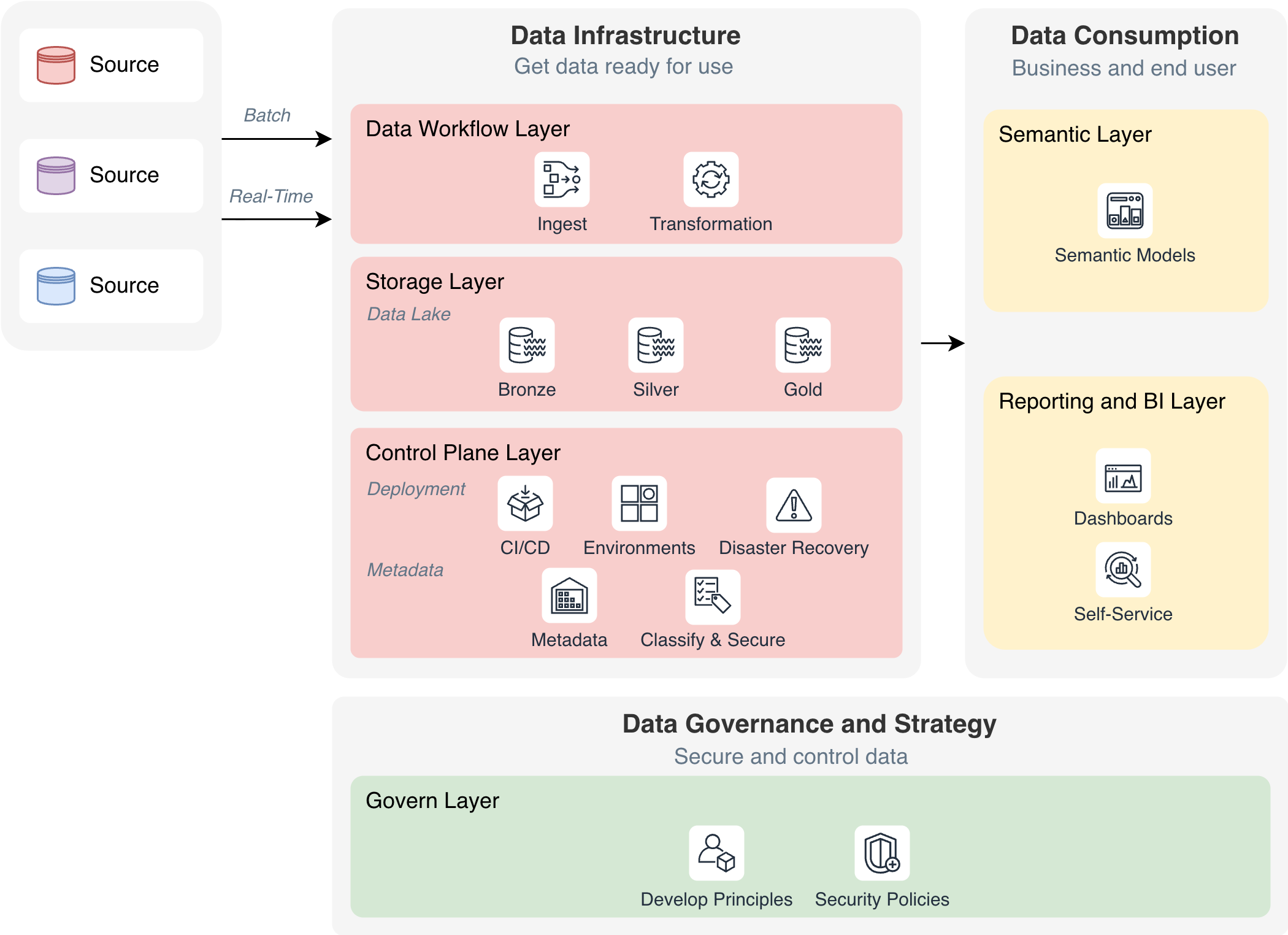

In the second phase, the data platform evolves from simply preparing data for reporting to making that data highly accessible, consistently fresh, and governed by strong security and compliance standards. This is where the platform becomes reliable not only for analysts and BI teams but also for a broader range of business users who expect reliable, trustworthy, and well-structured information.

The introduction of real-time ingestion and improved data orchestration significantly reduces latency, allowing the business to rely on more up-to-date insights. At the same time, semantic models simplify access to data by providing a unified, business-friendly layer that shields users from technical complexity and ensures consistent definitions across the organization.
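To make the idea of a semantic model concrete, here is a minimal sketch in Python. It is an illustration only, not the platform's actual implementation: the names `Metric`, `SEMANTIC_MODEL`, and `query_metric`, as well as the `gross_amount` and `refund_amount` columns, are assumptions made for the example. The point is that a business term such as "net revenue" is defined once and every consumer gets the same calculation.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

Row = Dict[str, float]


@dataclass(frozen=True)
class Metric:
    """A business-facing metric with one shared definition (hypothetical)."""
    name: str
    description: str
    compute: Callable[[List[Row]], float]


def net_revenue(rows: List[Row]) -> float:
    # Assumed column names; a real model would map to warehouse columns.
    return sum(r["gross_amount"] - r["refund_amount"] for r in rows)


def order_count(rows: List[Row]) -> float:
    return float(len(rows))


# The semantic model: business names on the left, technical logic on the right.
SEMANTIC_MODEL: Dict[str, Metric] = {
    "net_revenue": Metric("net_revenue", "Gross sales minus refunds", net_revenue),
    "order_count": Metric("order_count", "Number of orders", order_count),
}


def query_metric(name: str, rows: List[Row]) -> float:
    """Resolve a business term to its single agreed definition."""
    return SEMANTIC_MODEL[name].compute(rows)


orders = [
    {"gross_amount": 100.0, "refund_amount": 0.0},
    {"gross_amount": 50.0, "refund_amount": 20.0},
]
print(query_metric("net_revenue", orders))  # 130.0
print(query_metric("order_count", orders))  # 2.0
```

Because users ask for `net_revenue` rather than writing the subtraction themselves, a change to the definition happens in one place and propagates to every report.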

Security and compliance become core priorities at this stage. The platform enforces robust access policies and ensures that data is protected and handled according to industry best practices and regulatory requirements.

Together, these capabilities transform the platform into a secure, accessible, and business-aligned environment, ready to support broader self-service analytics and more advanced use cases.

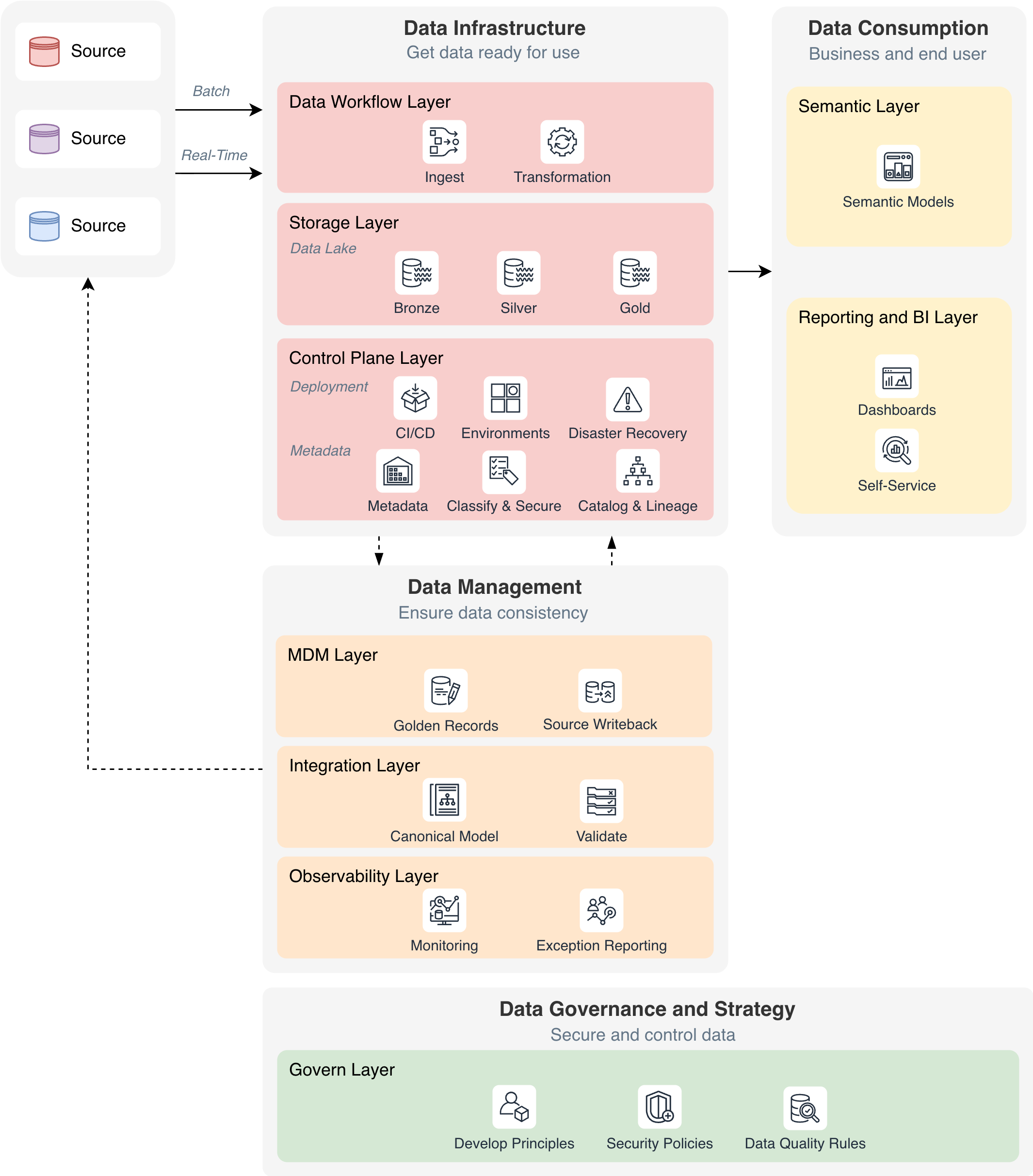

In this phase, the platform evolves into a reliable, consistent, and well-managed system. The focus shifts from simply moving and securing data to making sure it can be trusted over the long term. This includes creating golden records through master data management, enforcing canonical models and validation rules across systems, and introducing full observability in order to detect and resolve issues proactively.

The result is a platform where data can be trusted, where errors are visible and traceable, and where every dataset follows strict and clearly defined rules.
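As a rough illustration of golden records and validation rules, the sketch below merges two source records for the same customer and then checks the result. The survivorship rule used here (the newest non-empty value wins per field), the field names, and the two validation rules are all assumptions chosen for the example; real master data management tools offer far richer matching and survivorship logic.

```python
from typing import Dict, List, Optional

Record = Dict[str, Optional[str]]


def build_golden_record(records: List[Record]) -> Record:
    """Merge records for one entity: the newest non-empty value wins per field."""
    # ISO dates sort correctly as strings, so newest comes first.
    ordered = sorted(records, key=lambda r: r.get("updated_at") or "", reverse=True)
    golden: Record = {}
    for record in ordered:
        for field, value in record.items():
            if field not in golden and value not in (None, ""):
                golden[field] = value
    return golden


def validate(record: Record) -> List[str]:
    """Return a list of rule violations; an empty list means the record passes."""
    errors = []
    if not record.get("customer_id"):
        errors.append("customer_id is required")
    if "@" not in (record.get("email") or ""):
        errors.append("email must contain '@'")
    return errors


# Two hypothetical source systems describing the same customer.
crm = {"customer_id": "C-1", "email": "", "phone": "555-0100",
       "updated_at": "2024-03-01"}
billing = {"customer_id": "C-1", "email": "ana@example.com", "phone": None,
           "updated_at": "2024-01-15"}

golden = build_golden_record([crm, billing])
print(golden["email"], golden["phone"])  # ana@example.com 555-0100
print(validate(golden))                  # []
```

The golden record takes the phone number from the newer CRM entry and falls back to the older billing entry for the email, since the CRM value is empty.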

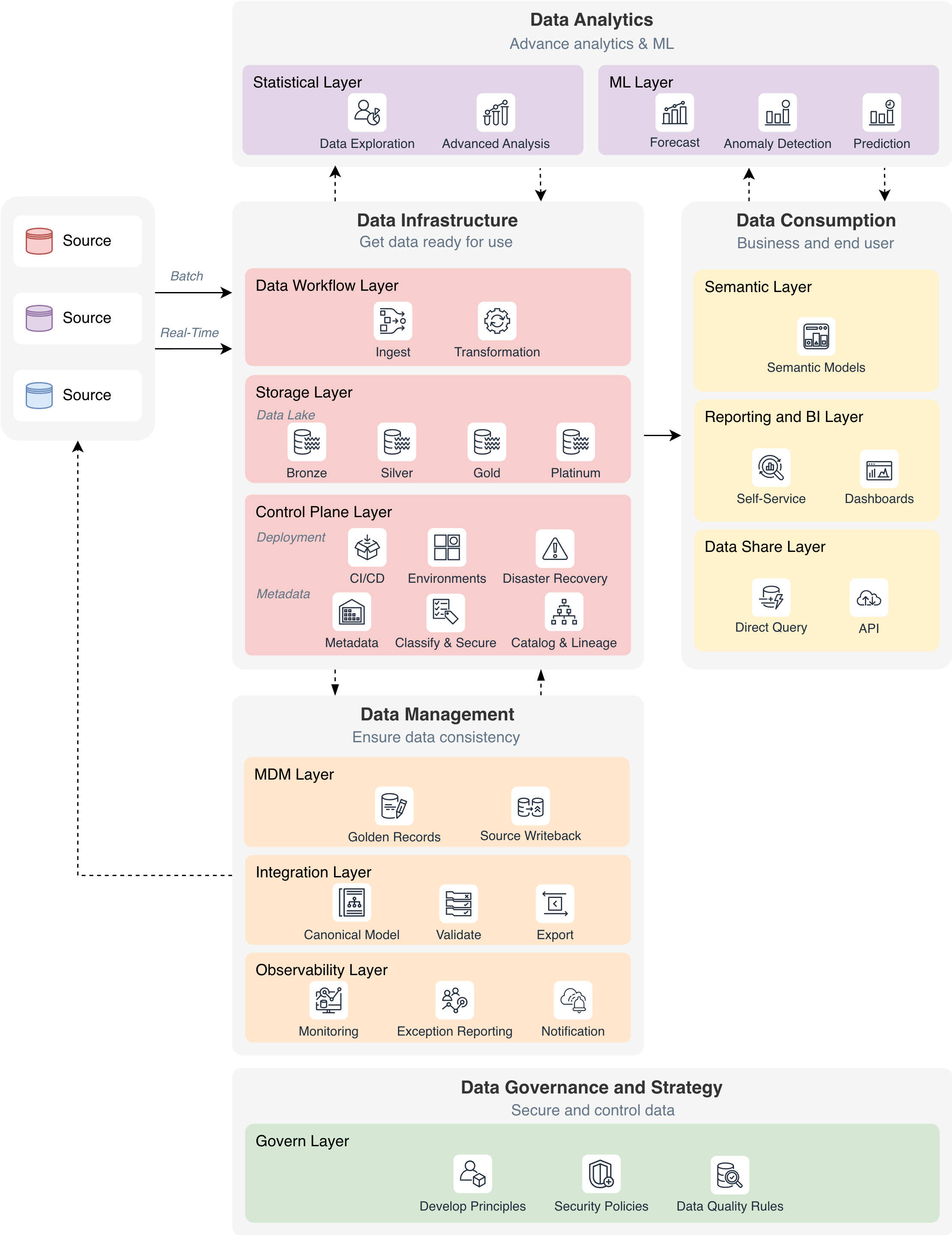

At this stage, the data platform becomes the single place to find all business information. It supports not just business intelligence but also advanced analytics, rapid experimentation, and machine learning projects tailored to specific needs. Data scientists, analysts, and domain experts now have easier access to high-quality, well-documented datasets, which shortens the path from exploring the data to using it. They can explore new ideas quickly, run experiments, and work with machine learning models the same way they work with other data workflows.

The platform also encourages true cross-functional collaboration. Multiple teams can work on the same data, information, and software without getting in each other's way. Robust versioning, access control, and governance mechanisms ensure that innovation happens quickly without sacrificing data integrity or trust.

The main focus of this phase is to extract real business value from the data and make that data accessible to external systems. The platform scales analytics both across business verticals and functional teams, enabling deeper insights, specialized analysis, and cross-team collaboration. This creates a flexible and robust foundation for advanced analytics, operational intelligence, and data-driven products.

In the final phase, the data platform evolves into an intelligent ecosystem where AI becomes an active participant in daily operations. The focus shifts from analytical insights to real-time decisioning, automation, and conversational interaction. Generative AI capabilities enable teams to explore data, design workflows, and perform complex analysis through natural language prompts, removing the technical barriers that once limited access to data.

AI agents become a core component of the platform, providing a catalog of specialized capabilities such as incident detection, automated troubleshooting, and self-healing responses. These agents continuously monitor pipelines, infrastructure, and data quality, reacting instantly to anomalies and preventing issues before they impact the business. This unlocks a new degree of operational resilience and efficiency.
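The monitor-detect-react loop described above can be sketched in a few lines of Python. To be clear about the assumptions: this example uses a plain z-score check on pipeline row counts as a stand-in for an AI agent, and the `react` function with its `retry:` action string is purely hypothetical. A production agent would use richer signals and real remediation steps.

```python
from statistics import mean, pstdev
from typing import List


def is_anomalous(history: List[float], latest: float, threshold: float = 3.0) -> bool:
    """Flag `latest` if it lies more than `threshold` std deviations from the mean."""
    if len(history) < 2:
        return False  # not enough data to judge
    mu = mean(history)
    sigma = pstdev(history)
    if sigma == 0:
        return latest != mu
    return abs(latest - mu) / sigma > threshold


def react(pipeline: str, history: List[float], latest: float) -> str:
    """Hypothetical self-healing step: request a retry when a run looks wrong."""
    if is_anomalous(history, latest):
        return f"retry:{pipeline}"
    return "ok"


# Row counts from recent runs of an imaginary pipeline.
row_counts = [1000, 1020, 980, 1010, 990]

print(react("orders_daily", row_counts, 1005))  # ok
print(react("orders_daily", row_counts, 400))   # retry:orders_daily
```

A sudden drop to 400 rows is far outside the usual range, so the sketch asks for a retry before downstream reports consume incomplete data.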

The platform also enhances collaboration by enabling intelligent, cross-team interactions. Business users, engineers, and analysts can interact with data and automation services conversationally, relying on AI to translate intent into actions. At the same time, the Data Share Layer ensures that the platform remains open and interoperable, exposing data through direct-query endpoints, APIs, and export mechanisms aligned with predefined integration contracts.
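One simple way to picture a predefined integration contract is as an agreed list of fields and types that every shared payload must satisfy. The sketch below is an assumption-laden illustration: the `ORDER_CONTRACT` fields and the `contract_violations` helper are invented for the example and do not describe the actual Data Share Layer.

```python
from typing import Any, Dict, List

# Hypothetical contract agreed with a consuming system: field name -> type.
ORDER_CONTRACT: Dict[str, type] = {
    "order_id": str,
    "amount": float,
    "currency": str,
}


def contract_violations(payload: Dict[str, Any], contract: Dict[str, type]) -> List[str]:
    """List every way the payload breaks the contract (empty list = compliant)."""
    problems = []
    for field, expected in contract.items():
        if field not in payload:
            problems.append(f"missing field: {field}")
        elif not isinstance(payload[field], expected):
            actual = type(payload[field]).__name__
            problems.append(f"{field}: expected {expected.__name__}, got {actual}")
    return problems


good = {"order_id": "A1", "amount": 9.5, "currency": "EUR"}
bad = {"order_id": "A1", "amount": "9.5"}

print(contract_violations(good, ORDER_CONTRACT))  # []
print(contract_violations(bad, ORDER_CONTRACT))
# ['amount: expected float, got str', 'missing field: currency']
```

Checking payloads against the contract before they leave the platform means external consumers see a stable shape, whether the data travels through an API, a direct-query endpoint, or an export.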

With AI fully integrated into the platform’s fabric, organizations can scale automation, accelerate innovation, and empower every team to operate with greater speed, precision, and intelligence.

Building a modern data platform is not a single project but a progressive journey through five transformative phases. Each phase lays the foundation for the next, gradually expanding capabilities, improving data quality, and unlocking new forms of business value. As the platform matures, it evolves from simply collecting data into a more advanced, intelligent ecosystem that supports analytics, automation, and AI-driven operations.

The journey begins with establishing the fundamental data infrastructure and delivering reliable reporting. It continues by improving accessibility, governance, and data consistency. From there, the platform expands into advanced analytics and multi-team collaboration. In the later stages, AI agents, automation, and natural-language interaction become integrated into daily workflows, turning the platform into an active participant in decision-making and operations.

Together, these phases illustrate the complete journey of data maturity, progressing through five key stages of value:

By progressing through these stages, organizations build a robust, intelligent data platform that evolves from simply describing the past to autonomously driving business actions, unlocking innovation, operational efficiency, and a sustained competitive advantage.

Lucian Neghina, Head of Data Engineering at eSolutions, is a leading expert in data strategy, modern data architectures, and building scalable data platforms, whether cloud-based or on-premises. He is experienced in delivering complete end-to-end data solutions, leading and mentoring cross-functional teams, establishing best practices, and promoting collaboration, innovation, and continuous improvement.