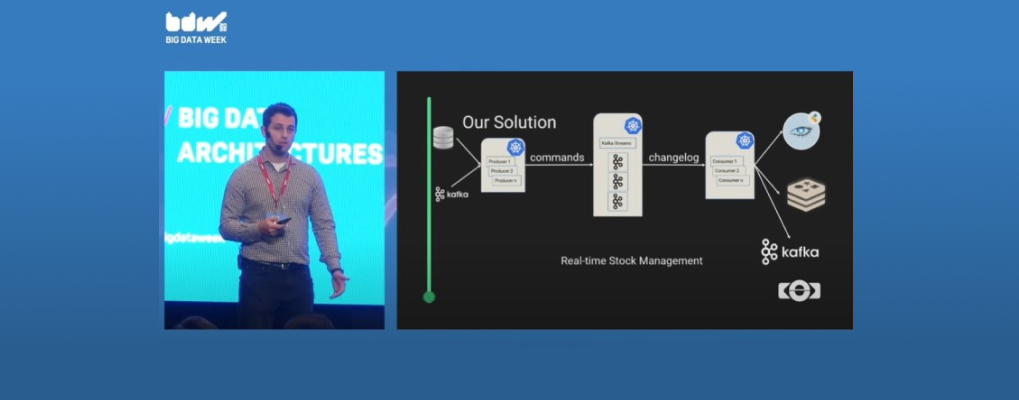

How to design a streaming data processing pipeline? Viorel Bibiloiu, Big Data Architect at eSolutions, presented our solution for designing a real-time stock management system for a large retailer using Kafka Streams, Kubernetes, and Cassandra on the Big Data Architectures Stage at this year’s edition of Big Data Week Bucharest Conference 2022.

Which are the retailers’ main pain points from a business standpoint? Having too much stock, having too little stock, as well as reacting slowly to the market changes are all problems that Viorel mentioned from the retail industry. To acquire near real-time visibility into the inventory and sales streams, we need to use streaming pipelines.

The streaming data processing pipelines could not exist without a big data platform on which to run. The platform implemented by eSolutions increases performance and process scalability, has a high availability to perform daily routine activities and facilitates the access to data for the entire ecosystem of apps and IT systems. This platform supports product lifecycle automation through integrated technologies and increases the speed at which changes can be made, therefore contributing to the optimization of operations by decreasing data processing time.

Going through the presentation you will see some basic types of processing streaming data, such as:

During his speech at BDW Bucharest Conference 2022, Viorel showed that a real life use-case is actually a combination of multiple basic types of streaming pipelines, at the end providing a comparison between Apache Spark Structured Streaming and Kafka Streams.

Watch his session in full to learn more:

Ready to learn more about how custom software products can benefit your business? Contact us today to schedule a consultation and start exploring your options!