In modern architectures such as microservices, services communicate with each other through messaging systems. When we design a system around a messaging system, two questions must be considered: can the system tolerate losing messages, and can it tolerate processing the same message more than once?

In this article, we will answer these two questions for Apache Kafka. Kafka supports three message delivery guarantees: at most once, at least once, and exactly once. It is important to choose the guarantee from the beginning, because this choice influences the configuration of our producers and consumers as well as the performance that Kafka can provide.

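Let's see what this means:

- At most once: a message is processed once or not at all.
- At least once: every message is received and processed, but some messages may be processed more than once after a failure.
- Exactly once: a message is processed once, and only once.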

You may be wondering why Kafka provides all three guarantees. Why not choose exactly once all the time? In the real world, any component of a distributed system can fail at any time: the producer, the consumer, the messaging system, the network, and so on. When one or more components fail, we must know whether our system can tolerate losing messages or processing the same message multiple times. The choice also affects performance: a stronger guarantee generally costs performance, while a weaker one improves it. For example, ensuring the "exactly once guarantee" requires additional work.

Now that we know what guarantees Kafka can provide, let's have a quick look at how we can configure each guarantee and how this works.

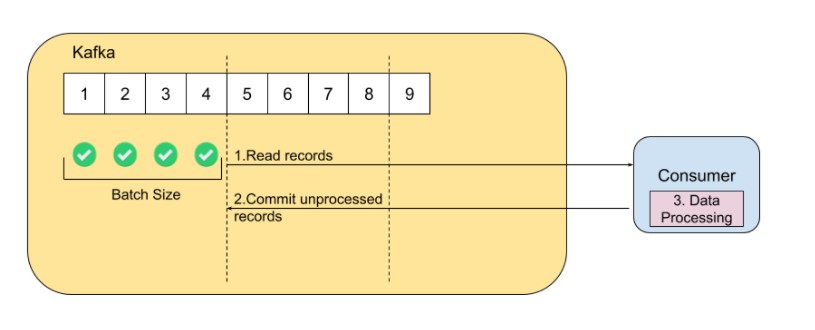

"At most once guarantee" means that a message is processed once or not at all. To set this guarantee, both consumer and producer must be configured. The consumer will receive messages from a Kafka topic and will commit them before the processing starts.

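If the consumer fails, all messages that were committed but not yet processed are lost, because Kafka considers them done. When a new consumer instance starts, Kafka sends it the messages that follow the last committed offset.

To enable this guarantee, set the "enable.auto.commit" property to "true" on the consumer side, so that offsets are committed in the background regardless of whether processing has finished. On the producer side, the usual fire-and-forget setup is the "acks" property set to "0", so the producer does not wait for an acknowledgment from the broker.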
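The commit-before-process behaviour can be illustrated with a small in-memory simulation. This is only a sketch: it does not use a Kafka client, the topic is a plain Python list, and `consume_at_most_once` and its `crash_at` parameter are hypothetical names introduced for the example.

```python
def consume_at_most_once(log, committed_offset, process, crash_at=None):
    """Commit each offset BEFORE processing it; return the committed offset.

    `crash_at` is a hypothetical knob: the offset at which the consumer dies
    after committing but before processing.
    """
    offset = committed_offset
    while offset < len(log):
        current = offset
        message = log[current]
        offset += 1  # commit first: the broker now considers this message done
        if current == crash_at:
            return offset  # crash: the message was committed but never processed
        process(message)
    return offset


log = ["m0", "m1", "m2", "m3"]
processed = []

# The first consumer instance crashes while handling offset 1.
committed = consume_at_most_once(log, 0, processed.append, crash_at=1)
# A new instance resumes from the committed offset.
committed = consume_at_most_once(log, committed, processed.append)

print(processed)  # "m1" is missing, and nothing was processed twice
```

The message at offset 1 is lost, but no message is ever handled twice, which is exactly the trade-off of this guarantee.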

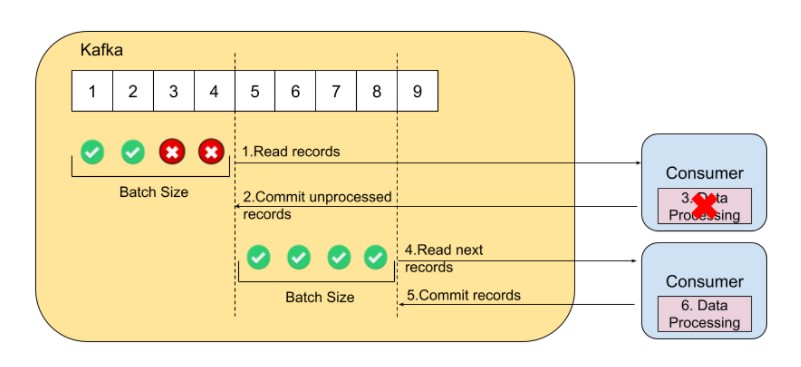

"At least once guarantee" means that you will definitely receive and process every message, but you may process some messages additional times in the case of a failure.

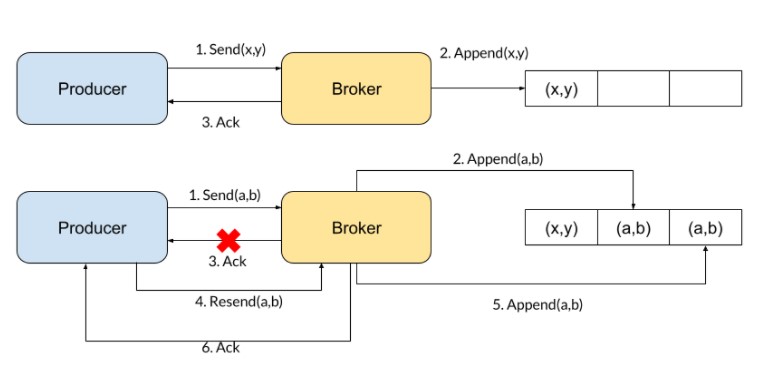

To ensure this guarantee, the producer must wait for an acknowledgment from the Kafka broker before it considers a message written. If the message is not written to the topic because of a network problem or a broker failure, the producer retries until the write succeeds. Some messages on a Kafka topic can therefore be duplicated: the producer writes messages in batches, and if a write appears to fail (for example, because the acknowledgment was lost) and the producer retries it, some messages in the batch may be written to Kafka more than once.
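To see how a retry can duplicate a batch, here is a minimal sketch with a toy in-memory broker. Nothing here is Kafka API: the `Broker` class, `send_with_retries`, and the `drop_ack_for` knob are assumptions made up for the illustration.

```python
class Broker:
    """Toy single-partition broker: the topic is an append-only list."""

    def __init__(self, drop_ack_for=()):
        self.log = []
        # Hypothetical knob: attempt numbers whose acknowledgment gets lost.
        self.drop_ack_for = set(drop_ack_for)
        self.attempts = 0

    def append(self, batch):
        self.attempts += 1
        self.log.extend(batch)  # the write itself succeeds...
        return self.attempts not in self.drop_ack_for  # ...but the ack may be lost


def send_with_retries(broker, batch, max_retries=5):
    """Resend the whole batch until the broker acknowledges it."""
    for _ in range(max_retries + 1):
        if broker.append(batch):
            return True
    return False


broker = Broker(drop_ack_for={1})  # the first acknowledgment never arrives
acked = send_with_retries(broker, ["a", "b"])

print(broker.log)  # the batch appears twice
```

From the producer's point of view the first attempt failed, so retrying is the only safe choice, and the duplicate is the price paid for not losing the batch.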

In contrast to the "at most once guarantee", the consumer commits messages only after they are processed. If the consumer fails, the new consumer instance reads again both the messages whose processing failed and the messages that were not yet processed. Even when the consumer commits messages after processing them, a network problem or a broker failure can prevent the commit from being recorded, in which case those messages are delivered and processed again.
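The process-then-commit order can be sketched the same way as a small simulation. Again this is not a Kafka client: the topic is a Python list, and `consume_at_least_once` and `crash_before_commit_at` are hypothetical names used only for this example.

```python
def consume_at_least_once(log, committed_offset, process, crash_before_commit_at=None):
    """Process each message first, then commit; return the committed offset.

    `crash_before_commit_at` is a hypothetical knob: the offset at which the
    consumer dies after processing but before the commit is recorded.
    """
    offset = committed_offset
    while offset < len(log):
        process(log[offset])
        if offset == crash_before_commit_at:
            return offset  # crash: processed, but the commit never happened
        offset += 1  # commit only after processing succeeded
    return offset


log = ["m0", "m1", "m2"]
processed = []

# The first instance processes offset 1 and crashes before committing it.
committed = consume_at_least_once(log, 0, processed.append, crash_before_commit_at=1)
# The replacement instance resumes from the last committed offset.
committed = consume_at_least_once(log, committed, processed.append)

print(processed)  # "m1" shows up twice, but nothing is lost
```

Because duplicates are possible, processing under this guarantee is usually designed to be safe to repeat.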

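To enable the "at least once guarantee", set the "acks" property to "1" on the producer side (the partition leader acknowledges the write; "all" additionally waits for the in-sync replicas) and the "enable.auto.commit" property to "false" on the consumer side, committing offsets manually after processing.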
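As a configuration sketch, the two settings could look like the dictionaries below. The producer property is spelled `acks` in Kafka's configuration. The keys are Kafka configuration names, while the broker address, the group id, and the variable names are placeholders assumed for the example; how the dictionaries are passed to a client depends on the library in use.

```python
# Producer side: wait for the partition leader to acknowledge each write.
producer_config = {
    "bootstrap.servers": "localhost:9092",  # assumed broker address
    "acks": "1",
}

# Consumer side: no automatic commits; the application commits after processing.
consumer_config = {
    "bootstrap.servers": "localhost:9092",  # assumed broker address
    "group.id": "example-consumer-group",   # hypothetical consumer group
    "enable.auto.commit": "false",
}
```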

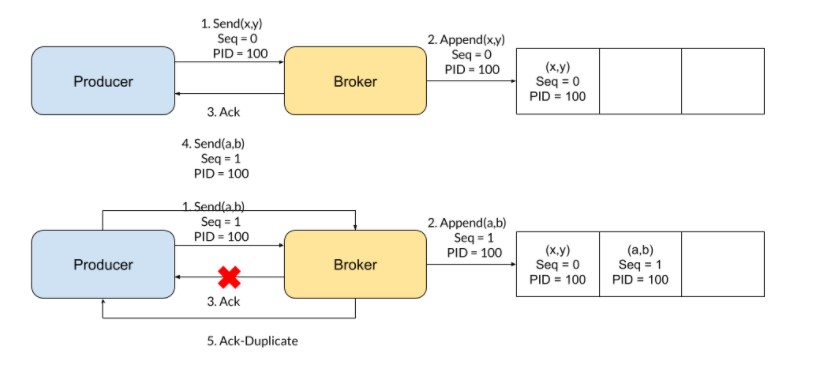

To avoid message duplication on the producer side, Kafka added a new feature: the idempotent producer.

When the idempotent producer feature is enabled, the producer attaches a sequence number and its producer id to each message. Using the sequence number and the producer id, the broker can tell whether a message was already written to a topic and discard the retry instead of appending it again. To enable the idempotent producer feature, set the property "enable.idempotence" to "true"; it requires the "acks" property to be "all".
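The deduplication idea can be sketched with a toy broker that remembers the highest sequence number it has written for each producer id. This is a simplification under stated assumptions: the `IdempotentBroker` class is hypothetical, and a real broker tracks sequence numbers per partition and per batch rather than per single message.

```python
class IdempotentBroker:
    """Toy broker that drops writes it has already seen from a producer."""

    def __init__(self):
        self.log = []
        self.last_seq = {}  # producer id -> highest sequence number written

    def append(self, producer_id, seq, message):
        if seq <= self.last_seq.get(producer_id, -1):
            return "duplicate"  # already written: acknowledge without appending
        self.log.append(message)
        self.last_seq[producer_id] = seq
        return "written"


broker = IdempotentBroker()
results = [
    broker.append("producer-1", 0, "a"),
    broker.append("producer-1", 1, "b"),
    broker.append("producer-1", 1, "b"),  # retry after a lost acknowledgment
    broker.append("producer-2", 0, "c"),  # other producers have their own sequence
]

print(broker.log)  # each message appears once
```

The retry is still acknowledged, so the producer stops resending, but the log keeps a single copy.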

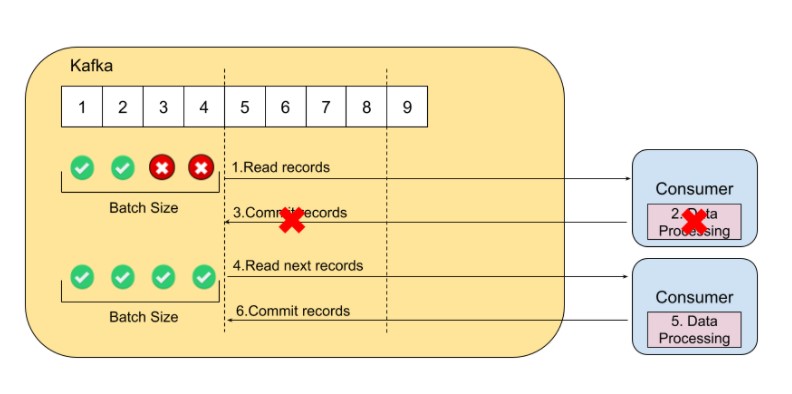

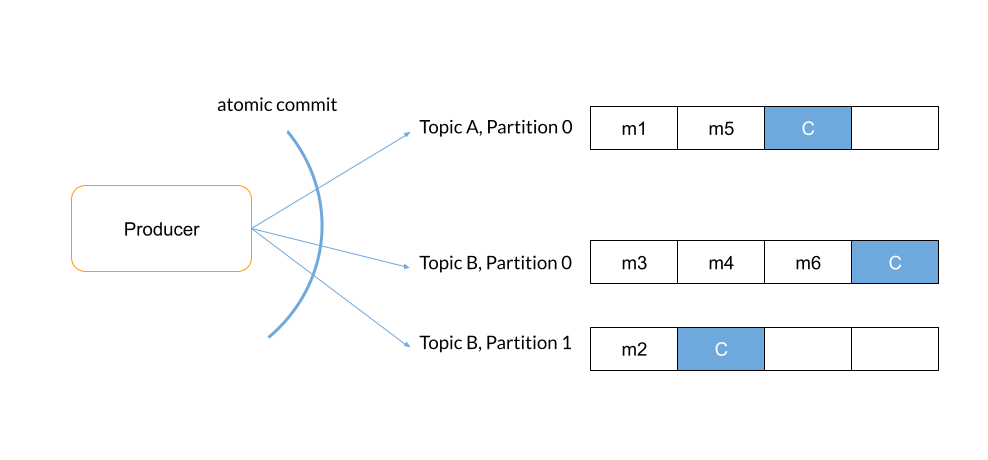

"Exactly once guarantee" means that a message is processed once, and only once. To ensure that a message is processed once and only once, Kafka added a new feature, Kafka Transactions. Transactions are useful only when the result of the processing is written to another Kafka topic.

When a producer writes messages within a transaction, it writes one or more messages to the Kafka topics. A transaction can span multiple topics. After the writes succeed, the producer ends the transaction by committing it, which causes a commit marker to be written: a special internal message that the consumer uses later. Likewise, when the producer aborts a transaction, an abort marker is written.

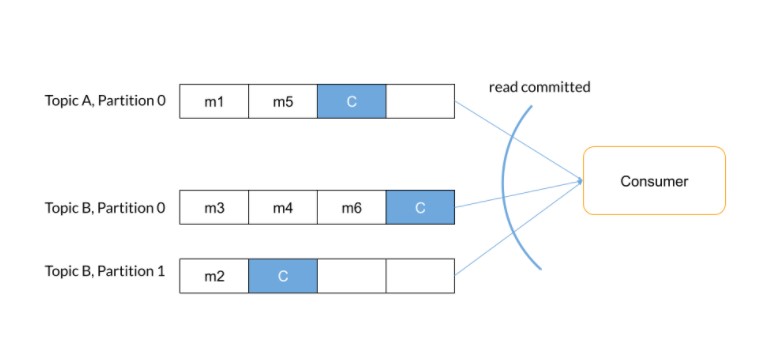

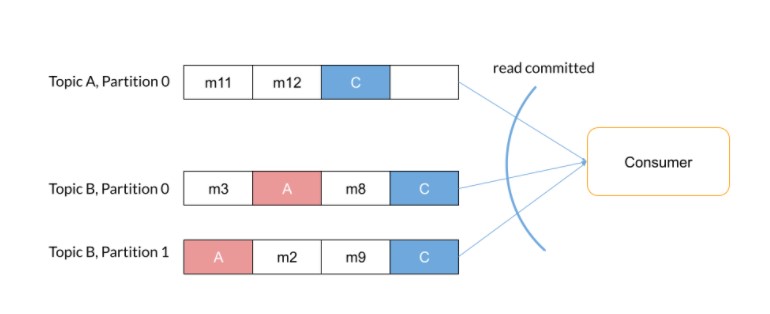

Once the messages are written to the Kafka topics, the consumer reads them but processes them only after the commit marker appears. If an abort marker appears instead, none of the messages from that transaction are processed.
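The effect of the markers on the consumer side can be sketched as a filter over a toy log. The record layout (`"data"`, `"commit"`, `"abort"` tuples) and the `read_committed` function are assumptions invented for this illustration; a real consumer works with offsets and does not expose markers to the application.

```python
def read_committed(log):
    """Return the payloads a read-committed consumer would hand to the application."""
    pending = {}    # transaction id -> messages seen so far, outcome unknown
    delivered = []
    for record in log:
        kind, txn = record[0], record[1]
        if kind == "data":
            pending.setdefault(txn, []).append(record[2])
        elif kind == "commit":
            delivered.extend(pending.pop(txn, []))  # marker seen: release messages
        elif kind == "abort":
            pending.pop(txn, None)                  # marker seen: drop messages
    return delivered


log = [
    ("data", "t1", "a"),
    ("data", "t2", "x"),
    ("data", "t1", "b"),
    ("abort", "t2"),
    ("commit", "t1"),
    ("data", "t3", "c"),  # t3 has no marker yet, so "c" is held back
]

print(read_committed(log))
```

Only the messages of the committed transaction reach the application; the aborted and the still-open transactions contribute nothing.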

Putting the "exactly once guarantee" into practice requires enabling the idempotent producer and transactions on the producer side, and transaction support on the consumer side. On the producer side, both features are enabled by setting the property "transactional.id", which must have a unique value for each producer instance. On the consumer side, to make the consumer read only the committed messages of a transaction, set the property "isolation.level" to "read_committed".
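A configuration sketch for this setup is shown below. The keys are Kafka configuration names; the broker address, the group id, the transactional id value, and the variable names are placeholders assumed for the example. Disabling auto-commit on the consumer is also an assumption of this sketch, based on the common pattern of committing consumed offsets as part of the producer's transaction.

```python
# Producer side: a transactional id enables transactions (and idempotence with them).
producer_config = {
    "bootstrap.servers": "localhost:9092",          # assumed broker address
    "transactional.id": "example-processor-1",      # hypothetical; unique per producer instance
}

# Consumer side: only read messages from committed transactions.
consumer_config = {
    "bootstrap.servers": "localhost:9092",          # assumed broker address
    "group.id": "example-consumer-group",           # hypothetical consumer group
    "isolation.level": "read_committed",
    "enable.auto.commit": "false",                  # assumption: offsets are committed within the transaction
}
```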

In this article, we discussed Kafka's message delivery guarantees and provided some details on the configuration that must be set up on the application side. If you're not sure which guarantee you need for your use cases or need help setting it up, we will gladly help.

In a future article, we will discuss the appropriate use cases for these three guarantees.

Mihai Ghita is a Data Engineer at eSolutions, describing himself as an enthusiastic and curious person. Mihai has always been passionate about technology and during the last years, he specialized in Big Data technologies, such as Kafka, Spark, and other streaming processing tools, also holding a Certificate as a Google Cloud Professional Data Engineer.
